Sunnyvale, Calif., AI supercomputer firm Cerebras says its next generation of wafer-scale AI chips can deliver double the performance of the previous generation while consuming the same amount of power. The Wafer Scale Engine 3 (WSE-3) contains 4 trillion transistors, a more than 50 percent increase over the previous generation thanks to the use of newer chipmaking technology. The company says it will use the WSE-3 in a new generation of AI computers, which are now being installed in a datacenter in Dallas to form a supercomputer capable of 8 exaflops (8 billion billion floating-point operations per second). Separately, Cerebras has entered into a joint development agreement with Qualcomm that aims to boost a metric of price and performance for AI inference 10-fold.

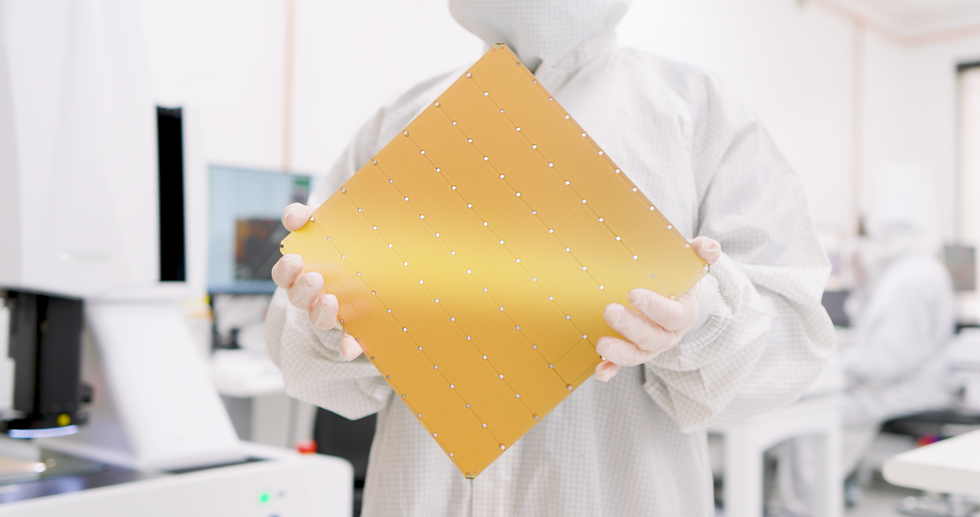

With WSE-3, Cerebras can keep its claim to producing the largest single chip in the world. Square-shaped with 21.5 centimeters to a side, it uses nearly an entire 300-millimeter wafer of silicon to make one chip. Chipmaking equipment is typically limited to producing silicon dies of no more than about 800 square millimeters. Chipmakers have begun to escape that limit by using 3D integration and other advanced packaging technology to combine multiple dies. But even in these systems, the transistor count is in the tens of billions.

As usual, such a big chip comes with some mind-blowing superlatives.

| Specification | WSE-3 |
| --- | --- |
| Transistors | 4 trillion |
| Square millimeters of silicon | 46,225 |
| AI cores | 900,000 |
| AI compute | 125 petaflops |
| On-chip memory | 44 gigabytes |
| Memory bandwidth | 21 petabytes per second |
| Network fabric bandwidth | 214 petabits per second |
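
The silicon area in the table follows directly from the 21.5-centimeter side length quoted earlier. As a rough sanity check, the short sketch below recomputes it and compares it with the approximate 800-square-millimeter limit for a conventional die. The variable names are illustrative, and the density figure is a simple chip-wide average, not a number from Cerebras.

```python
# Figures taken from the article; names are illustrative only.
SIDE_MM = 215              # 21.5 cm per side
RETICLE_LIMIT_MM2 = 800    # approximate maximum area of a conventional die
TRANSISTORS = 4e12         # 4 trillion

# Area of the square chip.
area_mm2 = SIDE_MM ** 2

# How many maximum-size conventional dies would cover the same area.
dies_equivalent = area_mm2 / RETICLE_LIMIT_MM2

# Average transistor density over the whole chip (a crude estimate).
density_per_mm2 = TRANSISTORS / area_mm2

print(f"Area: {area_mm2:,} mm^2")
print(f"Roughly {dies_equivalent:.0f} maximum-size conventional dies")
print(f"Average density: {density_per_mm2 / 1e6:.1f} million transistors per mm^2")
```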

You can see the effect of Moore’s Law in the succession of WSE chips. The first, debuting in 2019, was made using TSMC’s 16-nanometer tech. For WSE-2, which arrived in 2021, Cerebras moved on to TSMC’s 7-nm process. WSE-3 is built with the foundry giant’s 5-nm tech.

The number of transistors has more than tripled since that first megachip. Meanwhile, what they’re being used for has also changed. For example, the number of AI cores on the chip has largely leveled off, as has the amount of memory and the internal bandwidth. Nevertheless, the improvement in performance in terms of floating-point operations per second (flops) has outpaced all other measures.

CS-3 and the Condor Galaxy 3

The computer built around the new AI chip, the CS-3, is designed to train new generations of giant large language models, 10 times larger than OpenAI’s GPT-4 and Google’s Gemini. The company says the CS-3 can train neural network models up to 24 trillion parameters in size, more than 10 times the size of today’s largest LLMs, without resorting to a set of software tricks needed by other computers. According to Cerebras, that means the software needed to train a one-trillion-parameter model on the CS-3 is as straightforward as training a one-billion-parameter model on GPUs.

As many as 2,048 systems can be combined, a configuration that would chew through training the popular LLM Llama 70B from scratch in just one day. Nothing quite that big is in the works, though, the company says. The first CS-3-based supercomputer, Condor Galaxy 3 in Dallas, will be made up of 64 CS-3s. As with its CS-2-based sibling systems, Abu Dhabi’s G42 owns the system. Together with Condor Galaxy 1 and 2, that makes a network of 16 exaflops.
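
The 8-exaflop figure for Condor Galaxy 3 is consistent with 64 systems at 125 petaflops each. The sketch below reproduces that arithmetic, assuming peak compute simply adds up across systems and ignoring any communication overhead. The function name `cluster_exaflops` is hypothetical.

```python
PETAFLOPS_PER_CS3 = 125  # AI compute per WSE-3, as listed in the spec table


def cluster_exaflops(num_systems, petaflops_each=PETAFLOPS_PER_CS3):
    """Peak aggregate compute in exaflops, assuming ideal linear scaling."""
    return num_systems * petaflops_each / 1000


condor_galaxy_3 = cluster_exaflops(64)    # the Dallas installation
max_config = cluster_exaflops(2048)       # the largest configuration mentioned

# The article puts the full network at 16 exaflops, which implies that
# Condor Galaxy 1 and 2 together supply the remainder.
earlier_systems = 16 - condor_galaxy_3

print(condor_galaxy_3, max_config, earlier_systems)
```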

“The existing Condor Galaxy network has trained some of the leading open-source models in the industry, with tens of thousands of downloads,” said Kiril Evtimov, group CTO of G42, in a press release. “By doubling the capacity to 16 exaflops, we look forward to seeing the next wave of innovation Condor Galaxy supercomputers can enable.”

A Deal With Qualcomm

While Cerebras computers are built for training, Cerebras CEO Andrew Feldman says it’s inference, the execution of neural network models, that’s the real limit to AI’s adoption. According to Cerebras estimates, if every person on the planet used ChatGPT, it would cost US $1 trillion annually, not to mention an overwhelming amount of fossil-fueled energy. (Operating costs are proportional to the size of the neural network model and the number of users.)

So Cerebras and Qualcomm have formed a partnership with the goal of bringing the cost of inference down by a factor of 10. Cerebras says their solution will involve applying neural network techniques such as weight data compression and sparsity (the pruning of unneeded connections). The Cerebras-trained networks would then run efficiently on Qualcomm’s new inference chip, the AI 100 Ultra, the company says.
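
The article does not say which pruning method the two companies use. To make the idea of sparsity concrete, here is a minimal sketch of magnitude pruning, one common generic approach in which the weights closest to zero are set to zero. The function `prune_by_magnitude` and the sample weights are hypothetical and are not drawn from Cerebras or Qualcomm software.

```python
def prune_by_magnitude(weights, sparsity):
    """Return a copy of `weights` with the smallest-magnitude fraction zeroed.

    `sparsity` is the fraction of weights to remove, between 0 and 1.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")

    n_prune = int(len(weights) * sparsity)

    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])

    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Illustrative weights for a single small layer.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02]
pruned = prune_by_magnitude(weights, 0.5)
print(pruned)
```

Hardware that skips zeroed weights has fewer multiplications to perform, which is where the potential inference savings would come from.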
